Intrusion detection in Apigee hybrid - Part 1

The big picture

This series of 3 articles, co-authored with my colleague Nicola Cardace, discusses how to use an Intrusion Detection System (IDS) with the Apigee hybrid runtime and Google Cloud Monitoring and Logging.

The solution presented in these articles is specific to Google Cloud Platform (GCP) and Google Kubernetes Engine (GKE).

In the first article, we present how to configure the network-related infrastructure, as well as packet mirroring of the hybrid runtime internal traffic.

In the second article, we present the installation of Snort, an open source IDS.

At the end of the second article, we are able to generate API traffic and trigger basic Snort rules and execute a packet capture of the internal traffic between the Apigee components.

In the third article, we install a logging agent (fluentd) on the IDS Virtual Machine (VM) in order to push logs from the IDS to Cloud Logging. Finally, we create an alerting policy on Google Cloud Monitoring in order to see alerts directly on the GCP console.

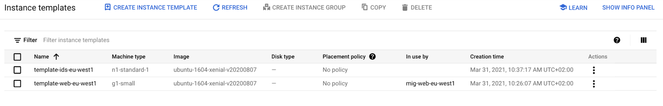

The big picture of the solution is presented on the following picture:

The API traffic on the Apigee hybrid runtime is mirrored to an IDS VM via an Internal Load Balancer (ILB). Packets are analyzed by the IDS and, once an intrusion is detected, logs are sent to Cloud Logging. The alerting capabilities of GCP (log-based metrics in Cloud Monitoring) are then used to raise alerts.

Important:

- In the following examples, we consider that an Apigee hybrid runtime has already been installed on a GKE cluster

- The Apigee hybrid runtime uses a specific subnet (apigee-subnet-eu), as does the IDS (apigee-subnet-eu-ids). The configuration of these subnets is detailed later in this article

IDS vs IPS

Before going further, let’s discuss the differences between an IDS and an IPS:

- Intrusion Detection System: An IDS is designed to detect a potential incident, generate an alert, and do nothing to prevent the incident from occurring. While this may seem inferior to an IPS, it may be a good solution for systems with high availability requirements, such as industrial control systems (ICS) and other critical infrastructure. For these systems, the most important concern is that the systems continue running, and blocking suspicious traffic may negatively impact their operations

- Intrusion Prevention System: An IPS, on the other hand, is designed to take action to block anything that it believes to be a threat to the protected system. An IPS is ideal for environments where any intrusion could cause significant damage, such as databases containing sensitive data

Why Intrusion Detection with Apigee hybrid?

Here is a non-exhaustive list of use cases explaining why a joint use of Apigee hybrid and an IDS is beneficial:

- You want to be alerted if an API developer configures a target endpoint or a service callout URL that is not allowed

- You want to be alerted on any egress traffic reaching botnets, C&C servers and other security sensitive destinations

- You want to be alerted about outgoing calls to certain geographies

- You want to be alerted when obsolete TLS versions are used on the ingress of your hybrid runtime

Security alerting use cases

Here are some security alerting use cases:

- Alerting when outgoing destinations/ports from within the cluster are outside an allow-list

- Alerting on any egress IP traffic reaching botnets, C&C servers (an indication of a malware infection) or other security-sensitive destinations

- Geographic resolution of IP locations with MaxMind

- Alert on obsolete TLS versions being used on the ingress/southbound due to lack of hardening

- Checking TCP/UDP port numbers used within the cluster

- Troubleshooting TLS sessions & connectivity

Step1: Set VPC, subnets and firewall rules

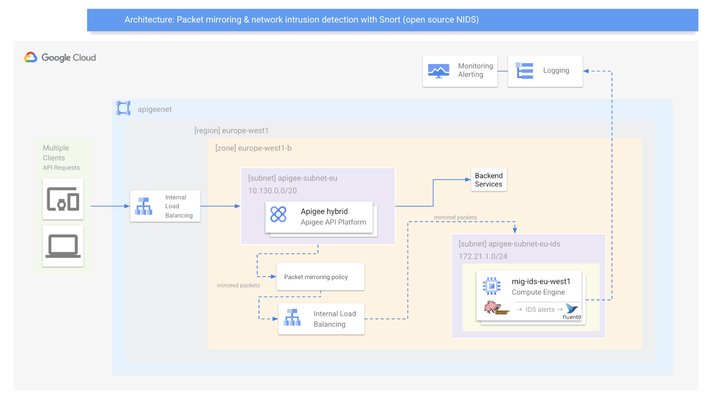

We do not want to rely on the default VPC network provided by GCP. For this reason we delete it and create a dedicated network and subnetworks for the purpose of this article. The region we choose for the subnetworks is europe-west1, but you can pick any region that suits you.

Create a custom VPC: apigeenet

export REGION=europe-west1

export ZONE=europe-west1-b

gcloud config set project [PROJECT_ID]

export PROJECT_ID=$(gcloud config get-value project)

gcloud compute networks create apigeenet --project=$PROJECT_ID --subnet-mode=custom

Create a custom subnet: apigee-subnet-eu

gcloud compute networks subnets create apigee-subnet-eu --project=$PROJECT_ID --range=10.130.0.0/20 --network=apigeenet --region=$REGION

At this point of the article, an Apigee hybrid runtime has been installed using the apigeenet network and apigee-subnet-eu subnetwork.

Instances on this network will not be reachable until firewall rules are created.

We allow all internal traffic between instances as well as SSH, RDP, and ICMP by creating the following two firewall rules:

Rule#1:

gcloud compute firewall-rules create apigeenet-allow-tcp-udp --network apigeenet --allow tcp,udp --source-ranges 0.0.0.0/0

Rule#2:

gcloud compute firewall-rules create apigeenet-allow-ssh-rdp-icmp --network apigeenet --allow tcp:22,tcp:3389,icmp

Create a custom subnet for the IDS: apigee-subnet-eu-ids

gcloud compute networks subnets create apigee-subnet-eu-ids \
--range=172.16.1.0/24 \
--network=apigeenet \
--region=$REGION
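Both subnets belong to the same VPC, so their ranges must not overlap. As a quick sanity check before creating them, the comparison can be sketched in plain shell arithmetic. The helper names (ip_to_int, cidr_first, cidr_last, cidrs_overlap) are illustrative assumptions and are not part of gcloud:

```shell
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  old_ifs=$IFS; IFS=.
  set -- $1            # split the address on dots into $1..$4
  IFS=$old_ifs
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# First address (as an integer) of a CIDR block such as 10.130.0.0/20.
cidr_first() {
  prefix=${1#*/}
  # 4294967295 is 2^32 - 1, i.e. a mask with all 32 bits set.
  mask=$(( (4294967295 << (32 - $prefix)) & 4294967295 ))
  echo $(( $(ip_to_int "${1%/*}") & $mask ))
}

# Last address (as an integer) of a CIDR block.
cidr_last() {
  prefix=${1#*/}
  host_bits=$(( (1 << (32 - $prefix)) - 1 ))
  echo $(( $(cidr_first "$1") | $host_bits ))
}

# Return 0 (true) when the two CIDR blocks share at least one address.
cidrs_overlap() {
  [ "$(cidr_first "$1")" -le "$(cidr_last "$2")" ] &&
  [ "$(cidr_first "$2")" -le "$(cidr_last "$1")" ]
}
```

For example, `cidrs_overlap 10.130.0.0/20 172.16.1.0/24` returns a non-zero status, which confirms that the two ranges used in this article are disjoint.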

Step2: Create firewall rules for IDS VM and IAP (Identity Aware Proxy) and Cloud NAT

- Rule#3 allows the IDS to receive ALL traffic from ALL sources. Be careful NOT to give the IDS VM a public IP in the later sections

- Rule#4 allows TCP port 22 (and ICMP) from the GCP IAP proxy IP range to ALL VMs, enabling you to SSH into the VMs via the Cloud Console

Rule#3:

gcloud compute firewall-rules create fw-apigeenet-ids-allow-all --direction=INGRESS --priority=1000 --network=apigeenet --action=ALLOW --rules=all --source-ranges=0.0.0.0/0 --target-tags=ids

Rule#4:

gcloud compute firewall-rules create fw-apigeenet-iapproxy-allow-ssh-icmp --direction=INGRESS --priority=1000 --network=apigeenet --action=ALLOW --rules=tcp:22,icmp --source-ranges=35.235.240.0/20
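Before moving on, it can be useful to review the rules created so far. A minimal sketch, assuming that all four rules keep the names used in this article (each name contains "apigeenet"); the function name is hypothetical:

```shell
# List the firewall rules created in this article.
# The name~apigeenet filter is a regular-expression match on the rule name,
# so it only works as long as the naming used above is kept.
list_apigeenet_firewall_rules() {
  gcloud compute firewall-rules list --filter="name~apigeenet"
}
```

Calling `list_apigeenet_firewall_rules` should show Rule#1 to Rule#4; only Rule#3 is restricted to the `ids` target tag.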

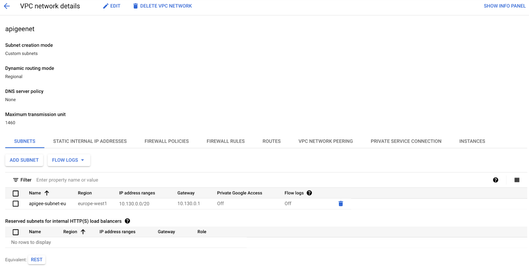

Create a CloudRouter

As a prerequisite for Cloud NAT, a Cloud Router must first be configured in the respective region:

gcloud compute routers create router-apigeenet-nat-euwest1 --region=$REGION --network=apigeenet

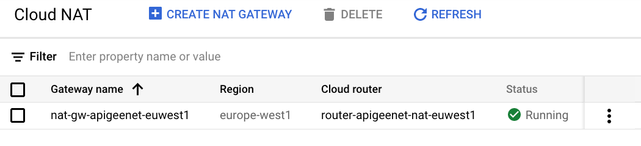

Here is an overview of the Cloud Router:

Configure a Cloud NAT

To provide Internet access to VMs without a public IP, a Cloud NAT must be created in the respective region:

gcloud compute routers nats create nat-gw-apigeenet-euwest1 --router=router-apigeenet-nat-euwest1 --router-region=$REGION --auto-allocate-nat-external-ips --nat-all-subnet-ip-ranges

Here is an overview of the Cloud NAT:

Step3: Create Virtual Machines

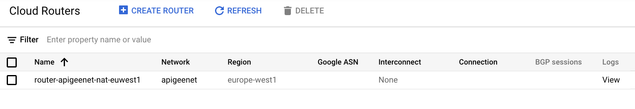

Create an Instance Template for the IDS VM.

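This template prepares an Ubuntu server in the target region, with no public IP. Its startup script installs Apache, so the VM listens on TCP port 80, the port probed by the health check created in Step4: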

gcloud compute instance-templates create template-ids-eu-west1 \
--region=$REGION \
--network=apigeenet \
--no-address \
--subnet=apigee-subnet-eu-ids \
--image=ubuntu-1604-xenial-v20200807 \
--image-project=ubuntu-os-cloud \
--tags=ids,webserver \
--metadata=startup-script='#! /bin/bash
apt-get update
apt-get install apache2 -y
vm_hostname="$(curl -H "Metadata-Flavor:Google" \
http://169.254.169.254/computeMetadata/v1/instance/name)"
echo "Page served from: $vm_hostname" | \
tee /var/www/html/index.html
systemctl restart apache2'

Create a Managed Instance Group for the IDS VM

This command uses the Instance Template in the previous step to create 1 VM to be configured as our IDS. The installation of Snort will be addressed in a later section.

gcloud compute instance-groups managed create mig-ids-eu-west1 \
--template=template-ids-eu-west1 \
--size=1 \
--zone=$ZONE
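The managed instance group creates the VM asynchronously. A minimal sketch for waiting until the group is stable and then listing its instance; the function name is hypothetical, and the arguments are the group name and zone used above:

```shell
# Wait until the managed instance group has finished creating its VM,
# then list the instances it manages.
# $1: managed instance group name, $2: zone
wait_for_ids_vm() {
  gcloud compute instance-groups managed wait-until "$1" --stable --zone="$2" &&
  gcloud compute instance-groups managed list-instances "$1" --zone="$2"
}
```

With the names of this article, the call would be `wait_for_ids_vm mig-ids-eu-west1 "$ZONE"`.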

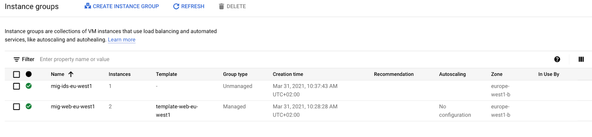

Here is an overview of the instance template:

Here is an overview of the instance group:

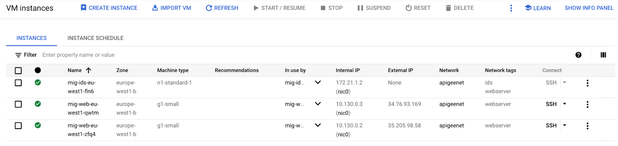

Here is an overview of the instance

Step4: Create an Internal Load Balancer (ILB)

Packet Mirroring uses an internal load balancer (ILB) to forward mirrored traffic to a group of collectors. In this case, the collector group contains a single VM.

Create a basic health check for the backend services:

gcloud compute health-checks create tcp hc-tcp-80 --port 80

Create a backend service group to be used for an ILB:

gcloud compute backend-services create be-ids-eu-west1 \
--load-balancing-scheme=INTERNAL \
--health-checks=hc-tcp-80 \
--network=apigeenet \
--protocol=TCP \
--region=$REGION

Add the created IDS managed instance group into the backend service group created in the previous step:

gcloud compute backend-services add-backend be-ids-eu-west1 \
--instance-group=mig-ids-eu-west1 \
--instance-group-zone=$ZONE \
--region=$REGION

Create a front end forwarding rule to act as the collection endpoint:

gcloud compute forwarding-rules create ilb-ids-ilb-eu-west1 \
--load-balancing-scheme=INTERNAL \
--backend-service be-ids-eu-west1 \
--is-mirroring-collector \
--network=apigeenet \
--region=$REGION \
--subnet=apigee-subnet-eu-ids \
--ip-protocol=TCP \
--ports=all

Note: even though TCP is listed as the protocol, the actual type of mirrored traffic will be configured in the Packet Mirror Policy in a future section.
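Once the ILB is in place, it is worth checking that the IDS VM passes the TCP health check on port 80. A minimal sketch, assuming that the default YAML output of `get-health` contains a `healthState: HEALTHY` line for a healthy backend (an assumption about the output format); the function name is hypothetical:

```shell
# Return 0 when at least one backend of the regional backend service
# reports HEALTHY, non-zero otherwise.
# $1: backend service name, $2: region
# Assumption: the default output of get-health contains a line such as
# "healthState: HEALTHY" for each healthy instance.
ids_backend_is_healthy() {
  gcloud compute backend-services get-health "$1" --region="$2" |
    grep -q 'healthState: HEALTHY'
}
```

With the names of this article, the check would be `ids_backend_is_healthy be-ids-eu-west1 "$REGION" && echo "IDS backend is healthy"`. The VM may need a few minutes after its creation before Apache is installed and the check succeeds.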

Step5: Configure Packet Mirror Policy

Setting up the Packet Mirror policy can be completed in one simple command.

In this command, you specify the 5 attributes of a Packet Mirroring policy:

- Region

- VPC Network(s)

- Mirrored Source(s)

- Collector (destination)

- Mirrored traffic (filter)

You may notice that the command below does not set any "mirrored traffic" filter. This is because the policy is configured to mirror ALL traffic, so no filter is needed. The policy mirrors both ingress and egress traffic and forwards it to the Snort IDS VM, which is the backend of the collector ILB.

Configure Packet Mirroring policy:

gcloud compute packet-mirrorings create mirror-web \
--collector-ilb=ilb-ids-ilb-eu-west1 \
--network=apigeenet \
--mirrored-subnets=apigee-subnet-eu \
--region=$REGION

At this point, Packet Mirroring has been configured.
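To review the resulting policy (collector ILB, mirrored subnet and filter), it can be displayed with `describe`. A minimal sketch; the function name is hypothetical:

```shell
# Show the Packet Mirroring policy as stored by GCP.
# $1: policy name, $2: region
show_mirroring_policy() {
  gcloud compute packet-mirrorings describe "$1" --region="$2"
}
```

With the names of this article, the call would be `show_mirroring_policy mirror-web "$REGION"`.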

The next article presents the IDS (Snort) installation as well as some basic tests.


Great article @joel_gauci

Can we use the same subnet for both apigee and IDS? Any reason why you created one specific subnet for each of them?


Hello Thiago,

It is better to isolate the two kinds of traffic: the mirrored packets are sent to the IDS VMs on a dedicated subnet.


@joel_gauci Got it. That makes total sense.
